A few days ago I attended a fireside chat with Edwin Chen of Surge AI at South Park Commons. One thing he highlighted was how excited he is about RL environments as a way to better train and evaluate frontier models. That capped off a journey that started a few weeks earlier, when Kasey Zhang and Osmosis hosted the RL IRL forum at Y Combinator. The forum coincided with an Unsloth/PyTorch/AMD hackathon that featured creating RL environments for Meta’s OpenEnv project.

RL IRL @Y Combinator

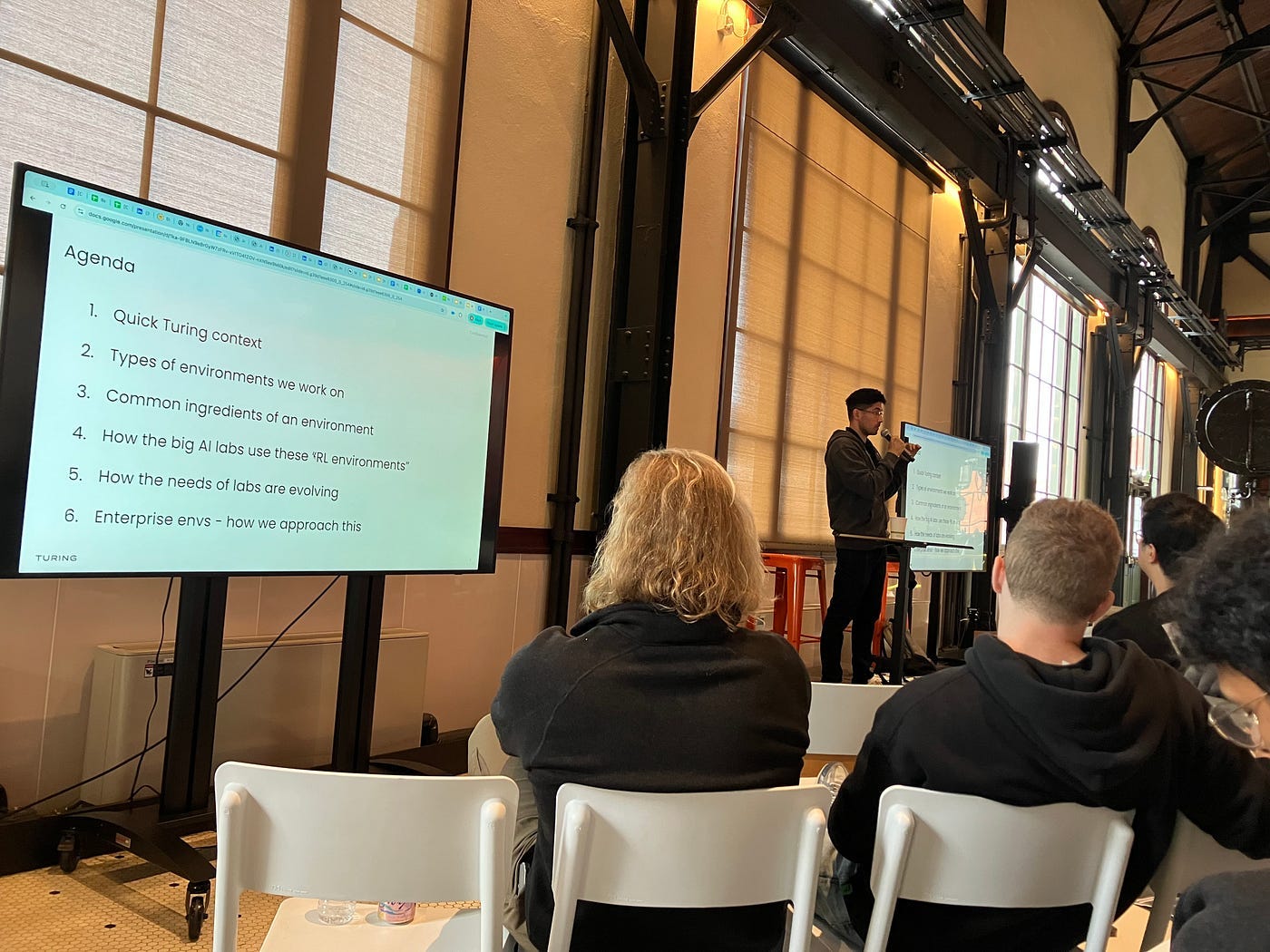

Kasey Zhang and Osmosis hosted a forum on applied RL, with six talks over the course of the afternoon. The summary is written up here, along with recordings of some of the talks.

For this post I’d like to highlight the talk by Anshul Bhagi, who gave an overview of Turing’s work on Reinforcement Learning (RL) environments for enterprise workflows. Turing builds sophisticated environments and generates high-quality data to help frontier labs and enterprises evaluate and train advanced AI models, particularly on complex, long-range tasks in domains like software engineering, finance, and healthcare. They develop three main types of environments: ones for evaluating and training coding agents, fully interactive UI environments that mimic applications, and general-purpose function-calling environments, often based on MCP servers. A significant challenge, and a key part of Turing’s value proposition, is partnering with domain experts to design tasks and data so that the environments accurately reflect workflows of high economic value and offer well-calibrated difficulty for model improvement. Customers primarily use the environments for model evaluation, for generating Supervised Fine-Tuning (SFT) data, and for RL training, and the trend is toward increasingly complex, hybrid, multi-application workflows.

Contributing RL Environments to OpenEnv

That same week I participated in a hackathon sponsored by Unsloth, PyTorch, and AMD. The original challenge was to train agents to pose and answer questions in order to stump an opponent. The hackathon was moved ahead a week, with the additional challenge of creating new environments for the OpenEnv project.

I had a great time creating environments, and the OpenEnv project made it easy with a simple interface. My two environments train agents to recognize stock trading patterns and to play the game of Mastermind. The winners went above and beyond in creativity and implementation:

3rd place:

@sub_zero5167 and @Zeus for a Survival Island game

2nd place:

@cpich3g and @dexter for PacMan: https://github.com/cpich3g/pacman-rl

1st place:

@osiris for GRPO on Julia, Ruby, Zig RL environments: https://medium.com/@yogeshsingla481/training-a-multi-language-3b-coder-lm-with-reinforcement-learning-grpo-f37577d5e5f7
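
For a sense of what such an environment looks like, here is a minimal sketch of a Mastermind-style environment in Python. It is not my hackathon submission and it does not use the OpenEnv API; the `MastermindEnv` class, the `StepResult` type, the `reset`/`step` signatures, and the simple 0/1 reward are all assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class StepResult:
    observation: tuple  # (exact matches, right colour in the wrong position)
    reward: float
    done: bool


class MastermindEnv:
    """Toy Mastermind environment with a reset/step loop.

    Hypothetical interface for illustration only, not the OpenEnv API.
    """

    def __init__(self, n_colors=6, code_length=4, max_turns=10, seed=None):
        self.n_colors = n_colors
        self.code_length = code_length
        self.max_turns = max_turns
        self._rng = random.Random(seed)
        self._secret = []
        self._turn = 0

    def reset(self, secret=None):
        """Start a new episode; pass `secret` to fix the hidden code."""
        if secret is not None:
            self._secret = list(secret)
        else:
            self._secret = [
                self._rng.randrange(self.n_colors)
                for _ in range(self.code_length)
            ]
        self._turn = 0

    def step(self, guess):
        """Score one guess and return feedback, reward, and a done flag."""
        guess = list(guess)
        if len(guess) != self.code_length:
            raise ValueError(f"guess must have {self.code_length} pegs")
        self._turn += 1
        # Pegs with the right colour in the right position.
        exact = sum(g == s for g, s in zip(guess, self._secret))
        # Colour overlap regardless of position, then remove exact matches.
        overlap = sum(
            min(guess.count(c), self._secret.count(c))
            for c in range(self.n_colors)
        )
        partial = overlap - exact
        solved = exact == self.code_length
        done = solved or self._turn >= self.max_turns
        # Sparse reward (an assumption): 1.0 only when the code is cracked.
        reward = 1.0 if solved else 0.0
        return StepResult((exact, partial), reward, done)
```

An agent would call `env.reset()` once per episode and then call `env.step(guess)` repeatedly, using the `(exact, partial)` feedback to pick its next guess until `done` is true. A denser reward, such as partial credit for exact matches, is one of the design choices that makes environments like this interesting to tune.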

Edwin Chen @South Park Commons

Finally, I attended a talk with Edwin Chen of Surge AI hosted by South Park Commons. The fireside chat delved into Edwin’s past, the founding and philosophy behind what Surge AI does, and the company’s deliberate choice to remain entirely bootstrapped, valuing long-term goals and product quality over short-term venture capital metrics.

However, what caught my attention was his answer to what excites him these days: RL environments. A recent blog post by Surge’s research team underscores this. It’s a really dense read, but well worth it. In it the team explores how realistic reinforcement learning environments reveal gaps in AI’s ability to act as autonomous agents. In a simulated company called Corecraft Inc., nine leading models, including GPT-5 and Claude Sonnet 4.5, attempted 150 workplace tasks. Despite their conversational fluency, top models failed over 40% of the time, showing limits in practical reasoning and real-world execution.

Surge then defines a Hierarchy of Agentic Capabilities based on what they learned:

Tool use and planning — decomposing goals and executing steps.

Adaptability — adjusting to unexpected inputs or changing conditions.

Groundedness — staying factual and contextually consistent.

Common-sense reasoning — inferring correctly beyond training data.

While models perform basic planning well, they struggle with adaptability and reasoning. The blog concludes that the future of AI lies in environments that replicate real-world work to cultivate truly capable, grounded agents.

Summary

There you have it — a quick tour of the RL Environment landscape encapsulated by three events over the course of a month. Hope the links help and happy reading!